The most powerful AI on Earth runs on hardware that’s doing nothing more than flipping switches between off and on. Here’s how that gets you to poetry.

Every AI breakthrough in history runs on a machine that knows two things. Zero. And one. That’s the entire vocabulary. The systems writing poetry and generating video and passing medical exams — all of them, underneath everything, are flipping switches between off and on, billions of times a second.

And somehow that’s enough. Today we’re going to understand why.

Two digits is all you need

Binary is a number system with two digits: zero and one. That’s what “bi” means. Our normal system uses ten digits (decimal — deci means ten). Binary uses two.

Why? Because it maps perfectly onto the simplest thing a machine can do: be on or be off. Think about a light switch. Two states. Up or down. On or off. One or zero. A computer is, at its most basic level, billions of tiny switches. The state of each switch represents exactly one bit of information. One binary digit.

You might wonder why we don't use switches with ten positions, so we could use regular numbers. The answer is boring and practical: reliability. Distinguishing between ten different voltage levels at high speed is genuinely hard. Distinguishing between “on” and “off”? Easy. Even with noise in the signal, even with voltage fluctuations, the machine can tell the difference. Two states give you maximum reliability at maximum speed. Good tradeoff.
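To make the reliability argument concrete, here is a toy model, not a real circuit simulation. It assumes signals range from 0 to 1 volt, that the receiver reads a voltage by snapping it to the nearest allowed level, and that the wire adds a fixed 0.1 V of noise. The voltage range, the noise figure, and the function names are all illustrative assumptions.

```python
def levels(n: int, vmax: float = 1.0) -> list[float]:
    """Evenly spaced voltage levels for an n-state signal (assumed 0 to vmax volts)."""
    return [i * vmax / (n - 1) for i in range(n)]

def encode(digit: int, n: int) -> float:
    """Send a digit as the voltage of its level."""
    return levels(n)[digit]

def decode(voltage: float, n: int) -> int:
    """Read a voltage back by snapping it to the nearest allowed level."""
    lv = levels(n)
    return min(range(n), key=lambda i: abs(lv[i] - voltage))

NOISE = 0.1  # assumed fixed noise added on the wire, in volts

for n in (2, 10):
    spacing = 1.0 / (n - 1)
    errors = sum(decode(encode(d, n) + NOISE, n) != d for d in range(n))
    print(f"{n:>2} levels: spacing {spacing:.3f} V, {errors} of {n} digits misread")
```

With two levels the states sit a full volt apart, so 0.1 V of noise never flips a bit. With ten levels the gap shrinks to about 0.11 V, and the same noise is more than half of it, so most digits get read as their neighbor.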

But here’s where it gets interesting. One switch alone only gives you two options. Two switches give you four combinations (00, 01, 10, 11). Three give you eight. Every switch you add doubles the count, so eight bits, called a byte, give you 256 combinations. That’s enough for every letter in English, uppercase and lowercase, plus numbers, punctuation, and a bunch of special characters. The system that does this mapping is called ASCII, standardized in the 1960s. ASCII actually needs only 128 codes (7 bits), so every character fits comfortably in one byte. The letter A is 65. In binary: 01000001. Eight bits, one byte, one letter.
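Here is a quick Python sketch of both ideas in that paragraph: the number of patterns doubling with every bit, and the letter A stored as one byte. `ord`, `chr`, `format`, and `int` are standard Python built-ins; the particular bit counts in the loop are just for illustration.

```python
# Each extra bit doubles the number of distinct patterns: 2 ** n.
for n in (1, 2, 3, 8):
    print(f"{n} bit(s) -> {2 ** n} combinations")

# ASCII maps characters to numbers; ord() gives a character's code.
code = ord("A")
bits = format(code, "08b")   # write it as 8 binary digits (one byte)
print("A ->", code, "->", bits)

# And back again: read the bit string as base 2, then look up the character.
print(bits, "->", chr(int(bits, 2)))
```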

And it’s not just letters. Everything in a computer is stored this way. Numbers. Colors. Sounds. The location of your finger on a touchscreen. It all gets converted into sequences of zeros and ones. Because that’s all the machine has.
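As one example of “everything else,” a common convention (an assumption here; real image and audio formats vary) stores a screen color as three bytes, one each for red, green, and blue, each ranging from 0 to 255. Whole numbers work the same way, just with more bits. The variable names below are illustrative.

```python
# Assumed convention: a color is three bytes (red, green, blue), each 0-255.
orange = (255, 165, 0)

# Write each channel as 8 bits and join them: 24 bits for one color.
color_bits = "".join(format(channel, "08b") for channel in orange)
print(color_bits)
print(len(color_bits), "bits")

# A whole number works the same way; 1000 fits in 16 bits (two bytes).
number_bits = format(1000, "016b")
print(number_bits)
```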

Logic gates: the world’s smallest decisions

Speaking a language isn’t the same as thinking. You need to do something with those zeros and ones. You need to make decisions. That’s where logic gates come in.

A logic gate is absurdly simple. One or two binary inputs in, one binary output out, based on a fixed rule. And there are only a handful of rules.

NOT takes one input and flips it. Give it a 1, get a 0. Give it a 0, get a 1.

AND takes two inputs. Both have to be 1 for the output to be 1. Otherwise, 0. It’s like two light switches wired in series — both need to be on for the light to work.

OR takes two inputs. If either is 1, the output is 1. The only way to get 0 is if both inputs are 0.
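The three rules above fit in a few lines of Python. This is a software model of how the gates behave, not how they are built in silicon, and the function names are our own.

```python
# Model each gate as a function on bits (0 or 1).
def NOT(a: int) -> int:
    return 1 - a

def AND(a: int, b: int) -> int:
    return 1 if a == 1 and b == 1 else 0

def OR(a: int, b: int) -> int:
    return 1 if a == 1 or b == 1 else 0

# Print the truth table: every combination of two inputs.
print("a b | AND OR")
for a in (0, 1):
    for b in (0, 1):
        print(f"{a} {b} |  {AND(a, b)}   {OR(a, b)}")

print("NOT 0 =", NOT(0), " NOT 1 =", NOT(1))
```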

That’s basically the whole toolkit. NOT, AND, OR. A few variations exist (NAND, NOR, XOR), but those three are the foundation. And here’s the part that might surprise you: you can build everything a computer does out of those three operations. Every calculation. Every comparison. Every decision. All of it is combinations of NOT, AND, and OR, arranged in clever patterns.
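Here is what “arranged in clever patterns” looks like for two of the variations: NAND and XOR written using nothing but NOT, AND, and OR. The gates are modeled as Python functions on single bits, and the names are our own.

```python
def NOT(a):
    return 1 - a

def AND(a, b):
    return a & b   # 1 only when both bits are 1

def OR(a, b):
    return a | b   # 1 when either bit is 1

# NAND: an AND followed by a NOT.
def NAND(a, b):
    return NOT(AND(a, b))

# XOR ("exclusive or"): one input or the other, but not both.
def XOR(a, b):
    return AND(OR(a, b), NOT(AND(a, b)))

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "NAND:", NAND(a, b), "XOR:", XOR(a, b))
```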

Addition? Two logic gates wired together (an XOR for the sum, an AND for the carry) and you’ve got a half adder, which adds two single bits. Combine two half adders with an OR gate and you get a full adder, which can also accept the carry from the column next to it. Chain 32 full adders together and you can add numbers in the billions. You just built a calculator out of nothing but yes/no questions. Nobody taught the circuit math. The math emerges from the logic.
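Here is a software sketch of that chain. Strictly speaking, each link needs to be a full adder (two half adders plus an OR, so each column can accept a carry), and 32 of them handle sums up to about 4.29 billion. The 32-bit width and the function names are assumptions for illustration. Python’s shift operators are used only to pull individual bits out of the numbers and put the result back together; the adding itself is done by the gate functions.

```python
def XOR(a, b):
    return a ^ b

def AND(a, b):
    return a & b

def OR(a, b):
    return a | b

def half_adder(a, b):
    """Add two bits: XOR gives the sum bit, AND gives the carry."""
    return XOR(a, b), AND(a, b)

def full_adder(a, b, carry_in):
    """Two half adders plus an OR: adds two bits and an incoming carry."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, OR(c1, c2)

def add(x, y, width=32):
    """Ripple-carry adder: chain `width` full adders, lowest bit first."""
    carry, result = 0, 0
    for i in range(width):
        a = (x >> i) & 1          # pick out bit i of each number
        b = (y >> i) & 1
        s, carry = full_adder(a, b, carry)
        result |= s << i          # place the sum bit in position i
    return result                 # a final carry out is dropped (overflow)

print(add(2, 3))
print(add(1_500_000_000, 2_000_000_000))
```

Adding 1.5 billion and 2 billion gives 3.5 billion, which still fits in 32 bits. Anything past 4,294,967,295 overflows, because the last carry has nowhere to go.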

The 21-year-old who made it click

The person who realized that true/false logic and electrical circuits were the same thing was Claude Shannon, a 21-year-old grad student at MIT. In 1937, Shannon wrote what many people consider the most important master’s thesis of the twentieth century. He showed that George Boole’s mathematical logic from 1854 — a system of true/false algebra that had been a purely abstract curiosity for 80 years — mapped perfectly onto electrical switches. True equals on. False equals off. AND, OR, NOT become circuits.

And here’s the thing that gets me. Boole’s algebra had no practical application when he published it. It was abstract math about the laws of reasoning. Nobody, including Boole himself, imagined it would become the foundation of every digital machine on the planet. Eighty years of “useless” theory, and then one grad student saw the connection. It’s like finding out that some weird doodle you drew on a napkin in college turned out to be the blueprint for a skyscraper.

The CPU: fetch, decode, execute, repeat forever

Binary is the language. Logic gates make decisions. But you need a boss — something to orchestrate it all. That’s the CPU, the Central Processing Unit.

A CPU does three things, over and over, billions of times per second. Fetch the next instruction from memory (think of memory as a massive bookshelf, with the CPU’s bookmark telling it which page to read next). Decode the instruction — figure out what it’s asking (“add these two numbers,” “compare this to that,” “move this data”). Execute the instruction — do the thing. Then move the bookmark forward and do it all again.

Fetch, decode, execute. Billions of times per second. Like an incredibly fast, incredibly obedient assembly-line worker who never gets bored, never makes a mistake, never asks why. Just grabs the next work order, does it, grabs the next one.
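Here is a toy version of that loop. The instruction set (LOAD, ADD, PRINT, JUMP_IF_LESS, HALT) is hypothetical and not any real CPU’s, a Python list stands in for memory, and `pc` (the program counter) plays the role of the bookmark. Real CPUs decode instructions from bit patterns; here “decode” just means splitting a tuple into an operation and its operands.

```python
def run(program):
    registers = {}
    pc = 0                          # program counter: the CPU's "bookmark"
    output = []
    while True:
        instruction = program[pc]   # FETCH the instruction the bookmark points at
        op, *args = instruction     # DECODE: separate the operation from its operands
        pc += 1                     # move the bookmark forward by default
        # EXECUTE
        if op == "LOAD":
            reg, value = args
            registers[reg] = value
        elif op == "ADD":
            reg, value = args
            registers[reg] += value
        elif op == "PRINT":
            (reg,) = args
            output.append(registers[reg])
            print(registers[reg])
        elif op == "JUMP_IF_LESS":
            reg, limit, target = args
            if registers[reg] < limit:
                pc = target         # jumping just means moving the bookmark
        elif op == "HALT":
            return output
        else:
            raise ValueError(f"unknown instruction: {op}")

# A hypothetical program: count from 0 to 2.
program = [
    ("LOAD", "x", 0),             # address 0: x = 0
    ("PRINT", "x"),               # address 1: show x
    ("ADD", "x", 1),              # address 2: x = x + 1
    ("JUMP_IF_LESS", "x", 3, 1),  # address 3: if x < 3, go back to address 1
    ("HALT",),                    # address 4: stop
]

run(program)
```

Notice that a loop isn’t a special feature. It’s just an instruction that moves the bookmark backward.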

A modern CPU runs at 3 to 5 gigahertz. One gigahertz = one billion cycles per second. To put that in perspective: if you could do one task per second, it would take you roughly 32 years just to match one billion cycles, and about 95 to 160 years to match a single second of one 3 to 5 GHz core (counting one task per cycle).
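Here is the arithmetic behind that comparison. It assumes one task per clock cycle on a single core. Real CPUs often do several things per cycle and have several cores, so this undercounts.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60   # about 31.6 million seconds

for ghz in (1, 3, 5):
    cycles = ghz * 1_000_000_000            # cycles in one second
    years = cycles / SECONDS_PER_YEAR       # time to match them at one task per second
    print(f"{ghz} GHz: {years:.0f} years")
```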

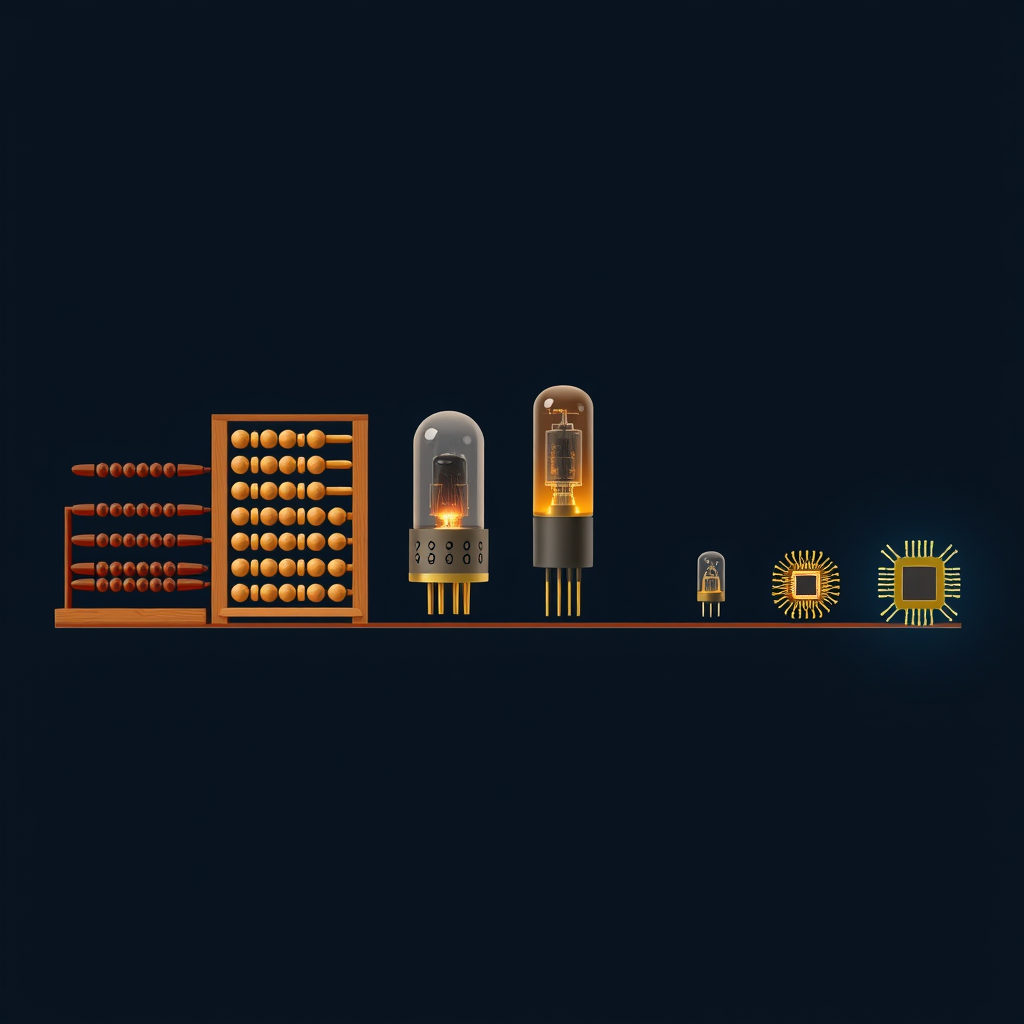

From 18,000 tubes to 100 billion transistors

The speed comes from the switches themselves, and from how small and fast they’ve become. The first general-purpose electronic computer, ENIAC, completed in 1945, used about 18,000 vacuum tubes as its switches. It weighed 30 tons, filled a room, and could do roughly 5,000 additions per second.

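Your phone? Its chip uses transistors, tiny solid-state switches, and packs somewhere between 15 and 20 billion of them onto a piece of silicon smaller than your thumbnail. Apple’s largest desktop chips pack over 100 billion. These parts are measured in nanometers, billionths of a meter. A human hair is about 80,000 nanometers wide. Chipmakers call their newest processes “3 nm” or “5 nm”; those names are industry labels rather than literal transistor sizes, but the real structures are still more than a thousand times narrower than a hair.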

In 80 years, we went from 18,000 switches that filled a room to tens of billions of switches on a chip smaller than your fingernail. That’s not an improvement. That’s a different universe. And that scale is a big part of why AI is possible now and wasn’t possible then. The algorithms behind AI need billions of operations per second. ENIAC couldn’t get close. Your phone does it routinely.
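To put a number on “different universe,” here is a rough sketch comparing ENIAC’s 17,468 vacuum tubes (from the ENIAC source below) with the transistor counts quoted above. Counting a tube and a transistor as one “switch” each is a simplification.

```python
import math

eniac_switches = 17_468   # vacuum tubes in ENIAC

for label, transistors in [("phone chip (~20 billion)", 20e9),
                           ("large desktop chip (~100 billion)", 100e9)]:
    ratio = transistors / eniac_switches
    doublings = math.log2(ratio)   # how many times the count doubled
    print(f"{label}: {ratio:,.0f}x more switches, about {doublings:.1f} doublings")
```

That works out to roughly 20 to 22 doublings in 80 years, or one doubling about every four years.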

Simple parts, complex behavior

Here’s the key idea, and it’s worth sitting with for a second.

A computer is not magical. It’s a machine built entirely out of simple yes/no switches, organized into logic gates, organized into circuits that do math. A CPU orchestrates all of it at mind-boggling speed.

The reason computers can do so many things isn’t because they’re inherently complex. It’s because they’re inherently fast. When you can do 5 billion simple things per second, you can build extraordinarily complex behavior out of extraordinarily simple parts.

Think about a pointillist painting. Up close, nothing but dots. Individual, tiny, colored dots. Nothing complex about any single one. But step back and you see a sunset, a face, a whole world. The complexity isn’t in the dot. It’s in the pattern. It’s in having millions of dots arranged precisely. A computer works the same way. The individual operation is trivially simple. On or off. But billions of those operations, in the right sequence, at the right speed — that gets you weather simulation, genome sequencing, and machines that understand human language.

Why this matters for AI

When we start talking about neural networks and machine learning in later episodes, it’s easy to think of AI as something fundamentally different from regular computing. Something mystical. It’s not. AI runs on the exact same transistors. Same logic gates. Same fetch-decode-execute cycle.

The difference isn’t the hardware. It’s the software — the instructions. And those instructions tell the machine to do something new: instead of following fixed rules, learn the rules from data.
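To make “learn the rules from data” concrete, here is a tiny sketch using a classic perceptron. This technique is swapped in for illustration; modern AI uses far larger networks trained differently. Instead of hard-coding the AND rule, the program sees the four input/output examples and nudges two weights and a bias until every answer is right. The weights, the bias, and the step size are all our own illustrative choices.

```python
# Training data: the AND gate's truth table, given as examples, not as a rule.
examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

w1, w2, bias = 0, 0, 0   # start knowing nothing
rate = 1                 # step size; 1 keeps everything in whole numbers

def predict(a, b):
    # Output 1 if the weighted sum of the inputs is above zero.
    return 1 if w1 * a + w2 * b + bias > 0 else 0

for epoch in range(20):
    mistakes = 0
    for (a, b), target in examples:
        error = target - predict(a, b)   # -1, 0, or +1
        if error != 0:
            mistakes += 1
            w1 += rate * error * a       # nudge each weight toward the right answer
            w2 += rate * error * b
            bias += rate * error
    if mistakes == 0:                    # every example correct: stop
        break

print([predict(a, b) for (a, b), _ in examples])
```

The hardware running this is the same as always. What changed is that the instructions describe how to adjust numbers from examples, instead of spelling out the AND rule directly.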

But the hardware doesn’t care. It just sees zeros and ones, flips its switches a few billion times a second, and does whatever the instructions say. The hardware is profoundly dumb. It’s the instructions that got clever.

And I want to stress this, because I hear the misconception constantly. People talk about AI as if it’s running on some exotic, alien hardware. It’s not. ChatGPT runs on the same building blocks as the chip that runs your spreadsheets. Large AI models mostly run on specialized chips designed to make AI math faster (GPUs and TPUs, which we’ll get to), but they’re still transistors, still binary, still logic gates. There’s no magic metal at the bottom of the stack.

Listen to this episode: [Zeroth: AI, Episode 2 — How Computers Actually Work]

Try the code: [Binary converter and logic gate simulator on Google Colab] — type a number, see it in binary. Click buttons to run AND, OR, NOT gates yourself. No programming experience needed.

Next up: To you, it’s a photo of your dog. To a computer, it’s 12 million pixels, each one stored as a few numbers. How does a machine turn the real world into something it can work with? That’s Episode 3.

Sources

- Horowitz, P. & Hill, W., The Art of Electronics, 3rd ed., Cambridge University Press, 2015. Discussion of noise margins and reliability advantages of binary signaling.

- American Standards Association, “ASCII — American Standard Code for Information Interchange,” 1963. Standard character encoding using 7-bit binary.

- Mano, M.M. & Ciletti, M.D., Digital Design, 6th ed., Pearson, 2017. Proof that any Boolean function can be implemented with combinations of AND, OR, and NOT gates.

- Shannon, C.E., “A Symbolic Analysis of Relay and Switching Circuits,” master’s thesis, MIT, 1937. Demonstrated the equivalence of Boolean algebra and electrical switching circuits.

- Intel Corporation, processor specifications, 2024-2025. Modern consumer CPUs typically operate at 3-5 GHz base clock frequencies.

- U.S. Army Research Laboratory / University of Pennsylvania, “ENIAC,” 1945. Used 17,468 vacuum tubes, weighed approximately 30 tons, performed ~5,000 additions per second.

- Apple Inc., “Apple M3 Ultra chip,” press release, 2025. Contains over 100 billion transistors manufactured on TSMC’s advanced process node.